DRUPAL

SUMMARY

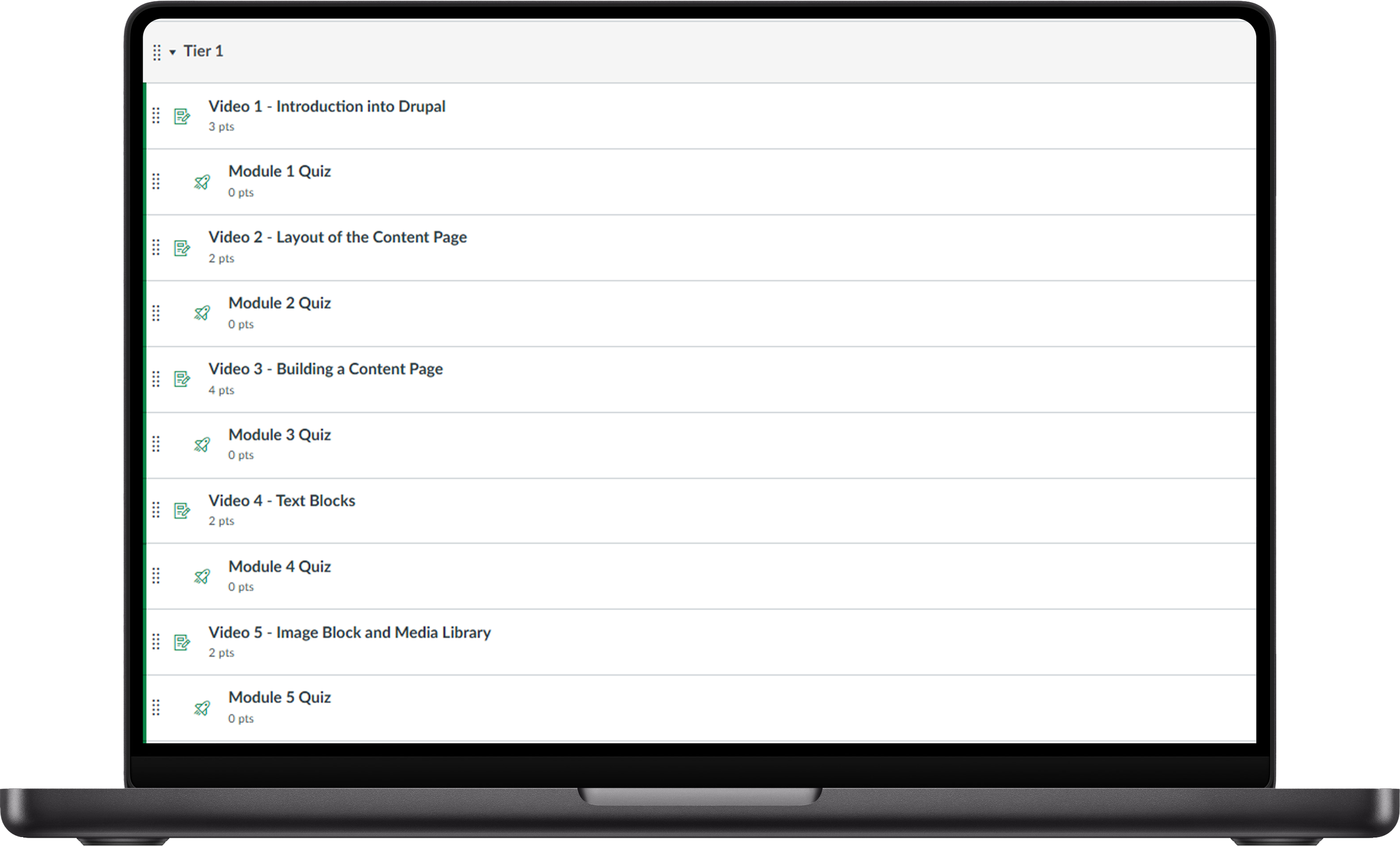

For this project, I was presented with the opportunity to redesign an existing training course for Drupal, the web content management system that the University uses to build its program pages and an integral tool in the IT department's responsibilities. I had no prior experience with this software, but with the help of IT subject matter experts (SMEs) and my own training design and program management expertise, we rebuilt the training into an asynchronous Canvas course that the University still uses today. The program contains 16 lessons, complete with videos, supporting documents, a quiz after each lesson, and a final survey that gauges how users feel about the new training and supports continuous improvement.

THE PROBLEM

During my first consultation with the IT department leader, we identified four key issues that needed to be addressed. First, the overall program was disorganized. There was no clear instructional sequence for the videos, and there was no way to tell the difficulty level of the knowledge and skills a video covered before watching it.

Second, the available videos were poorly edited and lacked a cohesive instructional approach. Narrators frequently jumped between topics within a single video, the video quality was poor, and there were no titles to inform participants of the topics covered. As a result, users had to watch the videos blindly to determine the subject matter.

Third, the overall experience of using this program was poor amongst almost all participants. New users often failed to retain the knowledge, skills, and abilities (KSAs) needed to begin using the software after completing the training. Advanced users frequently abandoned the training, believing it to be a waste of time. Those who did complete the training reported that the poor visual quality was so distracting that they gained few valuable insights.

Finally, the lessons were not housed in an LMS. Instead, they were stored in a shared cloud drive for the IT department, making the viewing process cumbersome. Participants had to manually sort through the videos to determine the correct sequence, and access had to be granted individually for each participant.

THE SOLUTION

To address the concerns identified during the consultation, I began by administering a survey to individuals who had completed the training program over a two-week period. The purpose of this survey was to validate the issues raised during the consultation and gather more detailed feedback from users. It included questions about aspects of the training participants liked, areas that needed improvement, and any additional features they would like to see in the new program. I also collected demographic data, such as age, university status, and the recency of training participation, to better understand the context of the responses. While the survey results largely confirmed the concerns raised in the consultation, one key insight emerged from more experienced users: a desire for an “advanced level” course. This realization led me to introduce a tiered learning path, allowing beginners to progressively build their skills while enabling advanced users to bypass foundational content and focus on more complex topics.

Following the survey, I held two workshop sessions with IT subject matter experts (SMEs) to define the key knowledge, skills, and abilities (KSAs) necessary for both beginner and advanced users. Together, we developed a project plan focused on ensuring that each lesson would build upon the last in a logical progression. This approach was intended to resolve the issue of content fragmentation in the previous training program by creating a coherent structure that reinforced essential concepts while gradually introducing more advanced tasks.

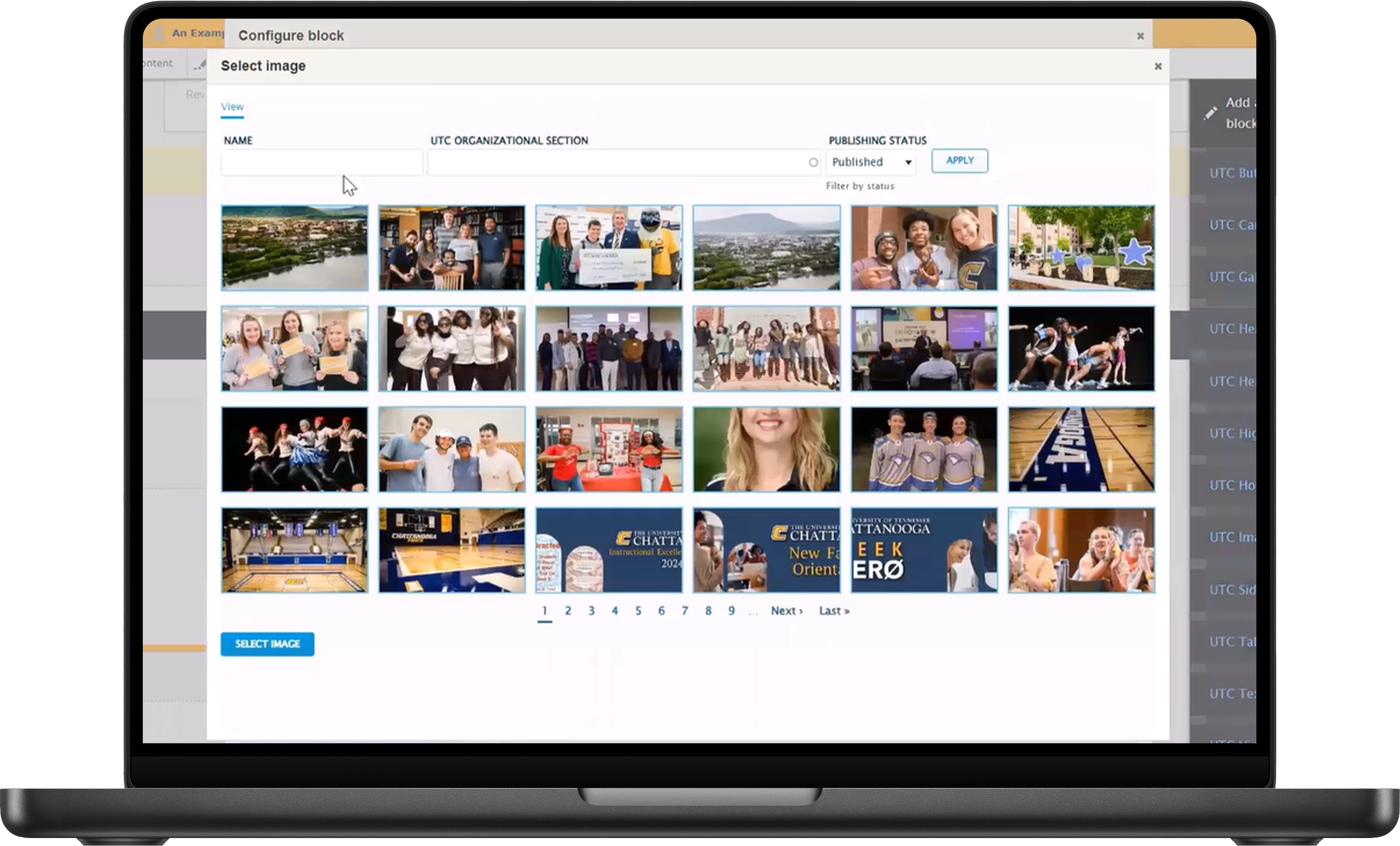

The curriculum was designed to start with foundational concepts such as software terminology, interface navigation, and institutional usage policies. Each subsequent lesson covered core software functions, such as text and image blocks, before advancing to more complex topics. This structured approach ensured that learning objectives were clear and relevant, resulting in a comprehensive lesson plan and development timeline that guided the overall progression of the course.

In terms of instructional format, I prioritized consistency, improved production quality, and Section 508 compliance. The final format consisted of instructional videos, quizzes, and supporting documentation, which provided further details on each topic. The videos were structured to introduce the lesson’s objectives, deliver concise content, and end with a professional outro. To maintain learner engagement without overwhelming them, each video was kept within a 7-10 minute range.

To enhance the production quality and accessibility of the lessons, I sourced animated intros and outros for each video. Using tools like Camtasia and Audacity, I improved video clarity and audio quality and added relevant transitions, animations, and background music where applicable. I also added captions to every video to support Section 508 compliance and make the content accessible to all learners. Each lesson was accompanied by a script, which underwent a review process to ensure a clear, natural flow and overall accuracy.

The production of each video lesson, including scripting, shooting, editing, and creating supporting documents and quizzes, took 2-3 days to complete. This timeline allowed for the careful development of high-quality content that was both engaging and informative.

Finally, to evaluate the effectiveness of the training and ensure continuous improvement, I applied Kirkpatrick’s Model of Training Evaluation (Kirkpatrick, 1996). This included assessments during the training itself and a post-training survey to measure learner engagement, knowledge retention, and overall satisfaction with the course.

THE PROCESS

SME CREATION OF MOCK VIDEO

The process begins with an IT SME creating a "mock video." In this step, the SME records themselves explaining the key aspects of the upcoming lesson, such as "text blocks." The goal is to capture all necessary details from the SME's perspective, which will later form the foundation for the script.

SCRIPT DRAFTING FROM MOCK VIDEO

Once I receive the mock video, I carefully review it and extract the essential elements and chronological steps presented by the SME. I then draft a script that ensures a logical, natural flow of content. The script also includes cues for the narrator to follow when filming the final video.

LEADERSHIP REVIEW OF SCRIPT

The draft script is sent to the leadership team for final review. This feedback ensures that the script aligns with the overall training goals and that the content is accurate and clear before moving forward to the recording phase.

AUDIO RECORDING BY NARRATOR

With the script finalized, the narrator records their audio, reading directly from the script. I emphasize the importance of clear enunciation and long pauses between sections. The pauses make later editing more manageable because they can be cut easily without disrupting the flow of the video.

VIDEO RECORDING

I then proceed with recording the video, where I screen-record myself interacting with the software and demonstrating the topic outlined in the script. This video is captured as an MP4 file and is later synchronized with the audio track recorded in the previous step.

VIDEO EDITING IN CAMTASIA

Both the video and audio files are imported into Camtasia, where I edit the content. This includes adding intros, outros, title screens, transitions, animations, captions, and background music. I also enhance the video quality and saturation, as well as improve the audio clarity, to ensure a polished final product.

FINAL REVIEW AND APPROVAL

Once the video is edited, I download the final file and send it to the leadership team for their final approval. This step ensures that the video meets all quality and content standards before it is finalized.

QUIZ CREATION AND PREPARATION FOR NEXT LESSON

During the review process, I also draft a 5-question quiz for the lesson to assess learner comprehension. Additionally, I begin preparations for the next lesson video, ensuring a continuous flow of content development and review.

APPLICABLE THEORIES

I-O PSYCHOLOGY

In designing the training program, I applied key principles from Industrial-Organizational psychology to enhance learner engagement and organizational support. Perceived Organizational Support (POS) played a crucial role, as incorporating user feedback into the redesign signaled to employees that their learning experience was valued. Additionally, Cognitive Load Theory guided the structuring of content to reduce mental strain and improve knowledge retention. By integrating these theories, I ensured that the training was both user-centered and strategically aligned with organizational goals.

POS

Perceived Organizational Support (POS) refers to employees' belief that their organization values their contributions and cares about their well-being (Jex & Britt, 2014). Redesigning the training with user feedback signaled to employees that their learning experience was valued, reinforcing the organization's investment in their development. Conducting a pre-redesign survey ensured employees’ voices were heard, further strengthening POS by demonstrating that their needs and concerns were prioritized. Additionally, integrating accessibility features (e.g., Section 508 compliance, captioning, and enhanced video production) promoted inclusivity, ensuring all learners—regardless of ability—could fully engage with the material. Employees who perceive strong organizational support are more likely to be engaged, committed, and productive in their roles (Jex & Britt, 2014).

COGNITIVE LOAD THEORY

Cognitive Load Theory (Sweller, 2011) explains how the brain processes and retains information, emphasizing the need to manage cognitive demand for effective learning. The original training overwhelmed learners with unstructured content, making knowledge retention difficult. By implementing structured lesson sequencing, limiting video length to 7–10 minutes, and adopting a progressive skill-building approach, extraneous cognitive load was minimized. Additionally, incorporating recap sections, quizzes, and clearly defined learning objectives helped manage intrinsic cognitive load, ensuring information was presented in digestible segments, ultimately enhancing comprehension and retention.

ENGAGEMENT AND MOTIVATION

Engagement and Motivation are positively correlated with job performance (Jex & Britt, 2014). Highly engaged employees tend to go beyond their formal job roles, demonstrating extra-role behaviors such as proactive problem-solving, knowledge sharing, and team collaboration. In contrast, disengagement often leads to frustration, resentment, and increased turnover. By incorporating elements of career growth and professional development into the training program, I designed a system that not only enhanced motivation and engagement but also encouraged employees to view their roles as steppingstones rather than short-term obligations.

INSTRUCTIONAL DESIGN

To create an effective and engaging training experience, I leveraged foundational instructional design models, including the ADDIE model (Branch, 2009), Gagné’s Nine Events of Instruction (Gagné et al., 1992), and Kirkpatrick’s Model of Training Evaluation (Kirkpatrick, 1996). The ADDIE framework provided a structured approach from analysis to evaluation, ensuring that each phase of the training was strategically developed. Gagné’s model guided lesson structuring to maximize engagement and retention, while Kirkpatrick’s model allowed for a comprehensive assessment of training effectiveness, measuring learner reactions, knowledge acquisition, behavior change, and overall results. By integrating these research-backed instructional strategies, I developed a training program that was accessible, interactive, and aligned with best practices in learning and development.

ADDIE MODEL (ANALYZE)

The first phase of the ADDIE model focuses on diagnosing key issues, understanding learner needs, and defining training objectives. To ensure the training addressed the right challenges, I conducted a thorough analysis with three parts. I consulted with IT leadership to gain insight into organizational training goals and identify critical gaps. I surveyed users who had previously completed the training to determine pain points, content deficiencies, and opportunities for improvement. Finally, I identified accessibility concerns to ensure compliance with Section 508 and promote inclusivity.

My analysis identified major issues with the training program and gave me direction on how to design the new training, including:

A lack of structure.

Poor video quality.

An inefficient delivery method.

Users struggled to navigate content, retain key information, and stay engaged.

The need to design an advanced-tier course, along with a beginner course.

The target audience spanned a wide range of ages and backgrounds, so the training had to be approachable for a general population.

ADDIE MODEL (DESIGN)

The design phase centered on structuring the training effectively by incorporating research-backed strategies and aligning content with essential KSAs (Knowledge, Skills, and Abilities). Key elements included:

LESSON STRUCTURING

Training was designed to progressively build knowledge, beginning with foundational concepts and advancing to more complex skills.

TIME CONSTRAINTS

Video lessons were capped at 7-10 minutes to enhance engagement and reduce cognitive overload.

INTERACTIVITY

Quizzes were embedded throughout the course to reinforce learning, assess retention, and provide instant feedback.

ACCESSIBILITY

Videos were captioned and produced with Section 508 compliance in mind so that all learners, regardless of ability, could fully engage with the material.

ADDIE MODEL (DEVELOPMENT)

This phase involved creating and assembling the training materials, refining content with subject matter experts (SMEs), and enhancing multimedia elements to optimize learner engagement. Key development steps included:

SCRIPT DEVELOPMENT

Scripts were drafted from the SMEs' mock videos and reviewed by leadership for accuracy and a clear, natural flow before recording.

MULTIMEDIA PRODUCTION

Video lessons were developed using tools like Camtasia and Audacity, incorporating animations, captions, and high-quality visuals to improve engagement.

CONTENT REFINEMENT

Iterative feedback from IT leadership was used to improve the clarity and effectiveness of the training materials.

ADDIE MODEL (EVALUATION)

To measure training effectiveness and identify areas for continuous improvement, I used the Kirkpatrick Model of Training Evaluation (Schmidt et al., 2009) as the overall evaluation method. The model breaks evaluation down into four levels: Reaction, Learning, Behavior, and Results, with ROI sometimes added as a fifth.

REACTION

How did participants feel about the training?

To assess user reactions, a post-evaluation survey was administered at the end of the training. This survey gathered feedback on the effectiveness of the new format, engagement levels and overall satisfaction with the training, and usability improvements compared to the previous training (e.g., ease of navigation and clarity of lessons). The survey also collected demographic data to analyze different user perspectives (e.g., beginner vs. advanced users, university status).

In summary, over 90% of participants had positive reactions to the new training. In addition, of the participants who had originally requested an advanced-level course, over 95% reported positive reactions. All groups said they enjoyed the training structure and found the overall design much more visually appealing and engaging.

LEARNING

Did participants acquire the intended knowledge and skills?

Each lesson included a quiz to assess immediate comprehension of the material. Supporting documents and transcripts were provided to reinforce learning. At the end of the training course, a final exam (one for the beginner level and one for the advanced level) served as a summative assessment, ensuring that participants retained essential KSAs by the end of the course.

BEHAVIOR

Did participants apply the knowledge and skills in their work?

While a direct long-term behavioral assessment was not formally administered, IT leadership reported back that both participants who took the training with no prior experience and participants who had previously completed the old training showed an increased baseline of KSAs, which allowed them to perform essential tasks in Drupal shortly after completing the training.

This change in behavior can be attributed to the structuring of lessons in a progressive skill-building format, differentiating between beginner and advanced training to ensure that users could immediately apply relevant skills at their respective levels, and improving training quality, making it easier for participants to recall and use what they learned in real work scenarios.

RESULTS / ROI

Did the training lead to measurable improvements?

The results of the training evaluation suggest that the redesigned program was highly effective in enhancing user engagement, knowledge retention, and skill application. The overwhelmingly positive reactions from participants indicate that the structured, visually appealing format addressed prior concerns about clarity and engagement. The quiz and exam performance demonstrated that the tiered learning approach successfully reinforced comprehension for both beginner and advanced users.

Moreover, IT leadership’s observation of improved baseline proficiency among trainees suggests that the restructuring of content not only made the material more digestible but also facilitated immediate application in real-world tasks.

Regarding ROI, the program is still used by the organization to date.

GAGNÉ’S NINE EVENTS OF INSTRUCTION

Gagné’s model provides a structured approach to designing effective learning experiences, and I incorporated these principles into my training project as follows:

GAIN ATTENTION

I used animated intros, professional outros, and improved video quality to engage learners from the start.

INFORM LEARNERS OF OBJECTIVES

At the beginning of each video, I clearly stated the learning objectives to set expectations for learners.

STIMULATE RECALL OF PRIOR LEARNING

I structured lessons to build progressively on previous knowledge. For example, users first learned basic terminology before advancing to text blocks, image blocks, and more complex skills.

PRESENT THE CONTENT

I delivered training through a combination of videos, supporting documents, and quizzes, catering to different learning preferences.

PROVIDE LEARNING GUIDANCE

By incorporating structured scripts, ensuring Section 508 compliance, and using clear transitions, I created a smooth and digestible learning experience.

ELICIT PERFORMANCE

I integrated quizzes after each lesson, allowing learners to immediately apply their knowledge and assess their understanding.

PROVIDE FEEDBACK

Learners received immediate quiz results within a structured lesson sequence, enabling them to self-correct and reinforce key concepts.

ASSESS PERFORMANCE

I included a final survey to evaluate how well the training met learners’ needs and to gather feedback for continuous improvement.

ENHANCE RETENTION AND TRANSFER

By organizing content in a progressive skill-building format, I ensured that learners could retain information and apply it effectively in real-world scenarios.

RESOURCES

Branch, R. M. (2009). Instructional design: The ADDIE approach. Springer. https://doi.org/10.1007/978-0-387-09506-6

Gagné, R. M., Briggs, L. J., & Wager, W. W. (1992). Principles of instructional design (4th ed.). Fort Worth, TX: Harcourt Brace Jovanovich College Publishers.

Jex, S. M., & Britt, T. W. (2014). Organizational psychology: A scientist-practitioner approach. John Wiley & Sons.

Kirkpatrick, D. (1996). Revisiting Kirkpatrick’s four-level model. Training & Development, 1, 54-57.

Sweller, J. (2011). Cognitive load theory. In J. P. Mestre & B. H. Ross (Eds.), The psychology of learning and motivation: Cognition in education (pp. 37–76). Elsevier Academic Press. https://doi.org/10.1016/B978-0-12-387691-1.00002-8